Technical debt just went mainstream thanks to AI

Today’s Washington Post lead op‑ed on Anthropic’s “new nightmare” isn’t really about “rogue AI.” It’s about the fact that we still don’t know what code we’re running, where it came from, or how to fix it at scale – and that reality has just graduated from niche security conferences to the op‑ed page of a major newspaper. This isn’t just for SBOM geeks like me anymore; it’s officially mainstream.

The op‑ed reports that Anthropic’s latest model – Mythos – can do what used to require elite exploit developers, only faster and cheaper. According to the piece, Mythos can:

- Discover real, high‑severity vulnerabilities across major operating systems and browsers

- Chain multiple bugs into working exploits

- Systematically explore and weaponize edge‑case behaviors

- Achieve success rates that make “AI red team on demand” a realistic concept

The important part is not hand‑wringing about AI in the abstract. The important part is that this story is being told to a general audience, in a major newspaper, as a concrete software risk story. When the Washington Post is explaining exploit chains and vulnerability discovery to normal people over their morning coffee, you know this has crossed out of the specialist bubble.

And there’s another crucial detail too many people are going to skim past: Anthropic is not holding back Mythos because they’re anti‑innovation. They’re holding it back because they are following the only responsible playbook that exists today: they are not releasing the specific vulnerabilities Mythos found until affected vendors can fix them, and they are not releasing the model itself, because doing so would effectively give anyone a point‑and‑click way to rediscover the same bugs and many more. That’s not “AI gone rogue.” That’s a stark demonstration of how powerful automated vulnerability discovery already is – and how thin our defensive margin has become.

For years, we’ve been running a quiet experiment in wishful thinking – and I hate to say I told you so, but I’ve been telling you so for over two decades now. We know our software supply chains are a mess: transitive dependencies stacked hundreds deep, long‑abandoned libraries still in production, vendor appliances we treat as magic boxes, firmware we’ve never seen, and SaaS providers who wave their hands and say “trust us.” Our “inventory” is often a patchwork of spreadsheets, out‑of‑date CMDB entries, and tribal knowledge.

The industry got away with this because serious vulnerability research was expensive. It took time, deep expertise, and a lot of manual effort to go hunting for weird, subtle bugs and then chain them into something exploitable. That economic friction gave defenders just enough breathing room.

Mythos‑class AI systems change the math. Once it’s cheap for an AI to fuzz and analyze huge codebases, recognize patterns that smell like past vulnerabilities, explore strange edge cases, and propose exploit chains, the economics of offense and defense shift. Suddenly it’s rational for an attacker – human or state – to point an AI at the long tail of obscure components and old versions that nobody’s touched in a decade.

Anthropic’s internal results are a proof‑of‑concept of that future. Their nightmare is that the rest of us still haven’t taken the basic step of knowing what we actually run. Which brings us to SBOMs.

You cannot defend what you don’t know you have

People hear “AI cybersecurity” and immediately imagine sentient firewalls and sci‑fi robots chasing hackers through cyberspace. That makes for fun trailers, but it’s not how this will play out.

In practice, Mythos‑era security is going to look painfully boring and operational:

- A model like Mythos finds a nasty bug in some specific library, kernel, driver, or service.

- A threat intel feed, vendor advisory, or government alert lands in your inbox.

- You have to answer three questions at machine speed:

  - Where do we use this?

  - Who owns it?

  - How important is it?

If you can’t answer those questions quickly and accurately, you don’t have an “AI problem.” You have a basic inventory problem that AI is simply exploiting more efficiently.

This is where Software Bills of Materials (SBOMs) stop being an abstract policy acronym and start looking like survival gear. An SBOM is a structured list of the components – and their dependencies – that make up a piece of software. That sounds dull until you try to respond to a real vulnerability wave without one.
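To make “components and their dependencies” concrete, here is a minimal sketch in Python. The SBOM below is hypothetical and only loosely shaped like CycloneDX JSON (it is not a complete or validated document), and `transitive_dependencies` is an illustrative helper, not part of any SBOM tool.

```python
# Hypothetical SBOM, loosely shaped like CycloneDX JSON.
# Component names and versions are placeholders for illustration only.
sbom = {
    "components": [
        {"bom-ref": "app", "name": "billing-api", "version": "2.3.0"},
        {"bom-ref": "lib-a", "name": "http-client", "version": "4.1.0"},
        {"bom-ref": "lib-b", "name": "tls-core", "version": "1.0.7"},
    ],
    "dependencies": [
        {"ref": "app", "dependsOn": ["lib-a"]},
        {"ref": "lib-a", "dependsOn": ["lib-b"]},
    ],
}


def transitive_dependencies(sbom, root_ref):
    """Return 'name@version' for every component reachable from root_ref."""
    graph = {d["ref"]: d.get("dependsOn", []) for d in sbom["dependencies"]}
    labels = {c["bom-ref"]: f'{c["name"]}@{c["version"]}' for c in sbom["components"]}
    seen = set()
    stack = list(graph.get(root_ref, []))
    while stack:
        ref = stack.pop()
        if ref in seen:
            continue  # already visited; also guards against cycles
        seen.add(ref)
        stack.extend(graph.get(ref, []))
    return sorted(labels[r] for r in seen if r in labels)


print(transitive_dependencies(sbom, "app"))
# ['http-client@4.1.0', 'tls-core@1.0.7']
```

Note that `tls-core` never appears in the application’s direct dependency list; it only shows up because the dependency graph is walked. That is exactly the kind of component that gets missed without an SBOM.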

Without SBOMs, your Mythos‑style future looks like this:

- A critical bug drops.

- Security sends out the ritual “are we affected?” email.

- Every team runs `grep`, `pip list`, and `npm ls`, then moves on to “check the Dockerfile” and “ask the vendor” exercises.

- Two weeks later you have a partial spreadsheet and a lingering sense of dread.

- And you were hacked on day two while you were still building that spreadsheet.

With SBOMs, it can look like this instead: a critical bug drops, your tooling queries SBOM data across your portfolio, and you get a list of affected systems, owners, environments, and business services to prioritize.
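As a rough sketch of what that query could look like, the Python below answers the three questions (where do we use this, who owns it, how important is it) over a tiny in-memory portfolio. Everything here is an assumption for illustration: the `SystemRecord` shape, the system and team names, the `libexample` component, and the exact-version matching. Real tooling would read SBOM files and handle version ranges.

```python
from dataclasses import dataclass


@dataclass
class SystemRecord:
    name: str          # system or service name
    owner: str         # owning team (would come from a CMDB or service catalog)
    criticality: str   # "high", "medium", or "low"
    components: dict   # component name -> version, as extracted from the SBOM


# Hypothetical portfolio; all names and versions are placeholders.
PORTFOLIO = [
    SystemRecord("intranet-wiki", "it-ops", "low", {"libexample": "1.4.2"}),
    SystemRecord("billing-api", "payments-team", "high", {"libexample": "1.4.2", "otherlib": "0.9.0"}),
    SystemRecord("batch-reports", "data-team", "medium", {"libexample": "1.5.0"}),
]

CRITICALITY_ORDER = {"high": 0, "medium": 1, "low": 2}


def find_exposure(portfolio, component, affected_versions):
    """Return systems running an affected version, most critical first."""
    hits = [s for s in portfolio if s.components.get(component) in affected_versions]
    return sorted(hits, key=lambda s: (CRITICALITY_ORDER.get(s.criticality, 99), s.name))


for system in find_exposure(PORTFOLIO, "libexample", {"1.4.2"}):
    print(system.name, system.owner, system.criticality)
# billing-api payments-team high
# intranet-wiki it-ops low
```

The point of the sketch is the shape of the answer: a prioritized list of systems with owners attached, produced in one lookup instead of a two-week email thread.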

Anthropic’s plan to give a small circle of trusted partners early access to Mythos‑grade capabilities only works if those partners already have this kind of mapping in place. If a model can tell you “here’s a remotely exploitable issue in this version of this library,” but you have no idea where that library lives in your world, you’ve built a fire alarm that doesn’t tell you whose house is on fire.

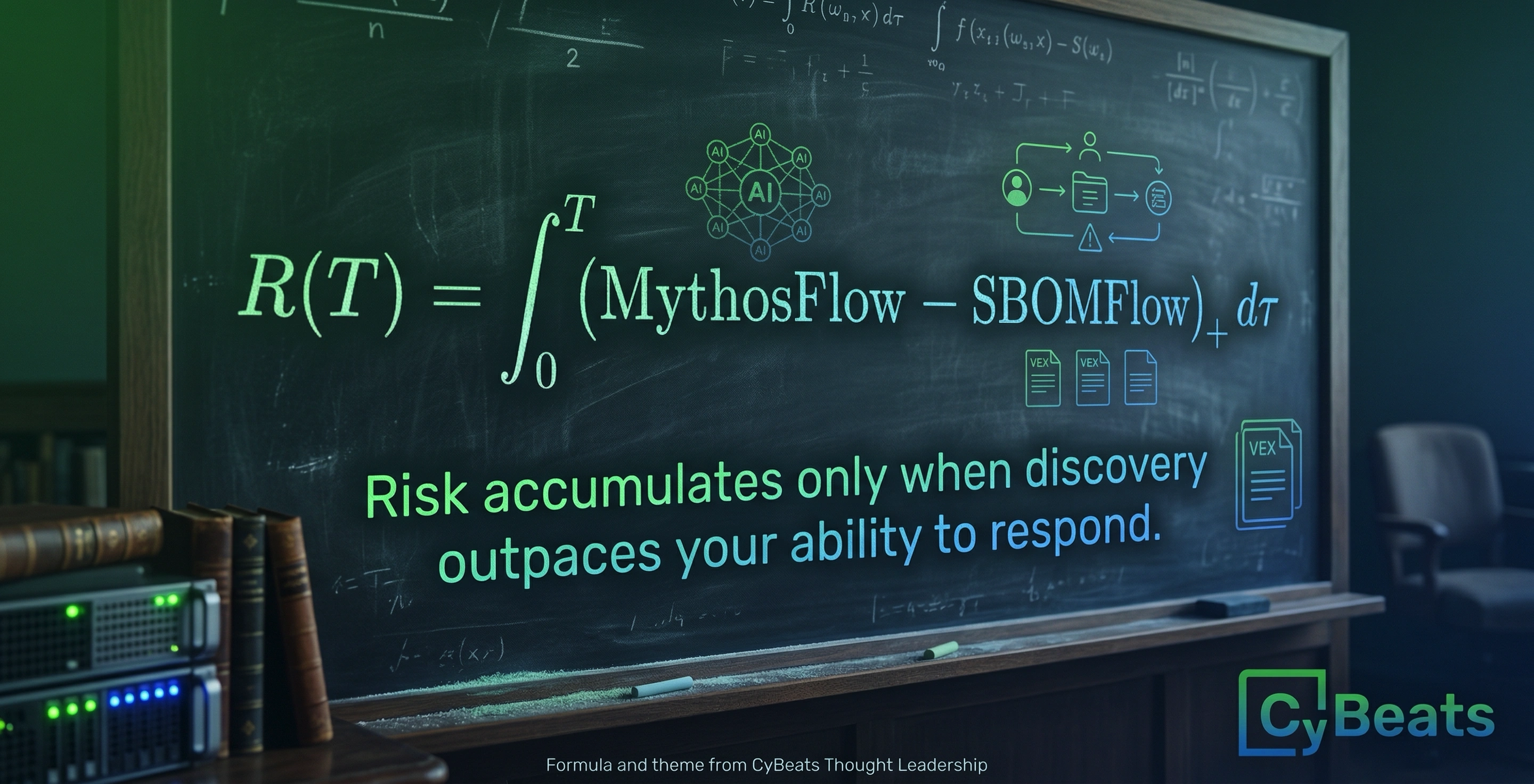

SBOMs are the translation layer between AI‑discovered vulnerabilities and real‑world remediation.

From Y2K to “Y2K on autoplay”

Y2K was a moment when we collectively realized that a long‑ignored class of bugs in critical systems could fail in correlated ways, and we decided – late, but in time – to do something about it. We inventoried systems, triaged, patched, and tested. It wasn’t glamorous, but it mostly worked. And because it worked, everybody thought of it as wasted effort – which it wasn’t.

The Anthropic story tempts us into the same framing: if we just work really hard for a few years, fix the worst of the backlog, and bolt on some AI‑assisted defenses, maybe we can “do Y2K again” and avoid the worst outcomes.

But Y2K had a fixed date far enough in the future, a well‑understood failure mode, and mostly static systems and code. Mythos‑era risk is continuous, open‑ended, and driven by systems we don’t fully control and code we don’t fully see. Y2K was a one‑time remediation sprint against known issues in code you largely owned; Mythos is Y2K on autoplay, pointed at an evolving, global software supply chain.

That is not a governance or board conversation you can navigate with vibes and a few high‑level dashboards. If you’re responsible for risk, you need to be able to answer: when AI‑accelerated research finds the next class of systemic software vulnerabilities, how fast can we locate our exposure? If the honest answer is “we can’t,” that’s an SBOM problem boards should worry about.

So what should you do differently?

If Mythos is a preview of our near future, then “adopt SBOMs” isn’t a compliance checkbox – it’s a precondition for having any meaningful AI security strategy at all.

- Make SBOMs a procurement hard gate: stop buying black boxes; if a vendor can’t provide machine‑readable SBOMs for their software and AI components, that risk needs to be surfaced explicitly – and often the answer should be “no deal.”

- Centralize SBOMs and connect them to business context: put them in a queryable repository, link them to your CMDB or service catalog, and integrate them with vulnerability management and threat intel.

- Invest in AI‑accelerated patching, not just AI‑accelerated panic: point AI tools at your own code and artifacts to find and fix issues, but only after you can map “this component” to real systems and owners.

- Update your governance conversations: ensure boards and risk committees are asking about SBOM coverage, exposure mapping, and accountability, not just hearing abstract “AI risk” briefings.
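One way a board could ask about “SBOM coverage” in concrete terms is as a simple ratio: of the services in your catalog, how many have an SBOM on file, and which ones don’t? The sketch below is a minimal Python illustration; the function name `sbom_coverage` and the service names are hypothetical, and a real metric would likely also weigh SBOM freshness and depth.

```python
def sbom_coverage(service_catalog, services_with_sbom):
    """Return (coverage ratio, sorted list of catalog services lacking an SBOM)."""
    catalog = set(service_catalog)
    covered = catalog & set(services_with_sbom)
    missing = sorted(catalog - covered)
    ratio = len(covered) / len(catalog) if catalog else 0.0
    return ratio, missing


# Hypothetical inputs for illustration.
catalog = ["billing-api", "intranet-wiki", "batch-reports", "vendor-appliance"]
have_sbom = ["billing-api", "batch-reports"]

ratio, missing = sbom_coverage(catalog, have_sbom)
print(f"{ratio:.0%} covered; missing: {missing}")
# 50% covered; missing: ['intranet-wiki', 'vendor-appliance']
```

The list of missing services is arguably more useful than the percentage, since it names the black boxes that still need an owner to chase down an SBOM.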

In plain English: you can’t patch what you can’t see, and you can’t see what you never bothered to describe. SBOMs are how you describe it – and now that Mythos has made it to the op‑ed page, that description is no longer optional.
